Dataset: Trajectories of people approaching the robot to interact

To reveal the trajectories of people who want to talk to the robot, we set up a tracking environment in the "ATC" shopping center in Osaka, Japan, and used it to collect trajectories of people around our robot.

This dataset includes trajectories of both people who intend to initiate interaction with the robot and people who do not. (Trajectories of people other than the target person are also included.)

We distinguished two types of situations. Situations in which a person clearly intended to initiate interaction are named "Intention to interact", and situations in which a person exhibited some other distinctive intention are named "Other distinctive intention". The latter also includes situations where the person was not interested in the interaction at first and started it only after being approached by the robot (see pictures below).

| Trajectories of Intention to interact |

|

Trajectories of Other distinctive intention |

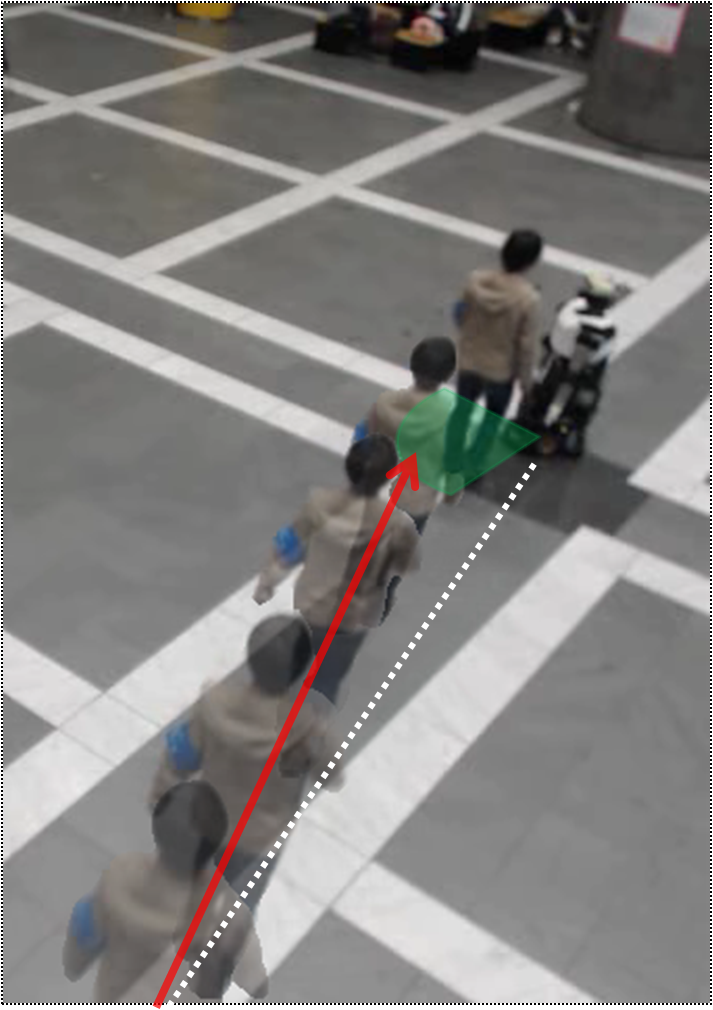

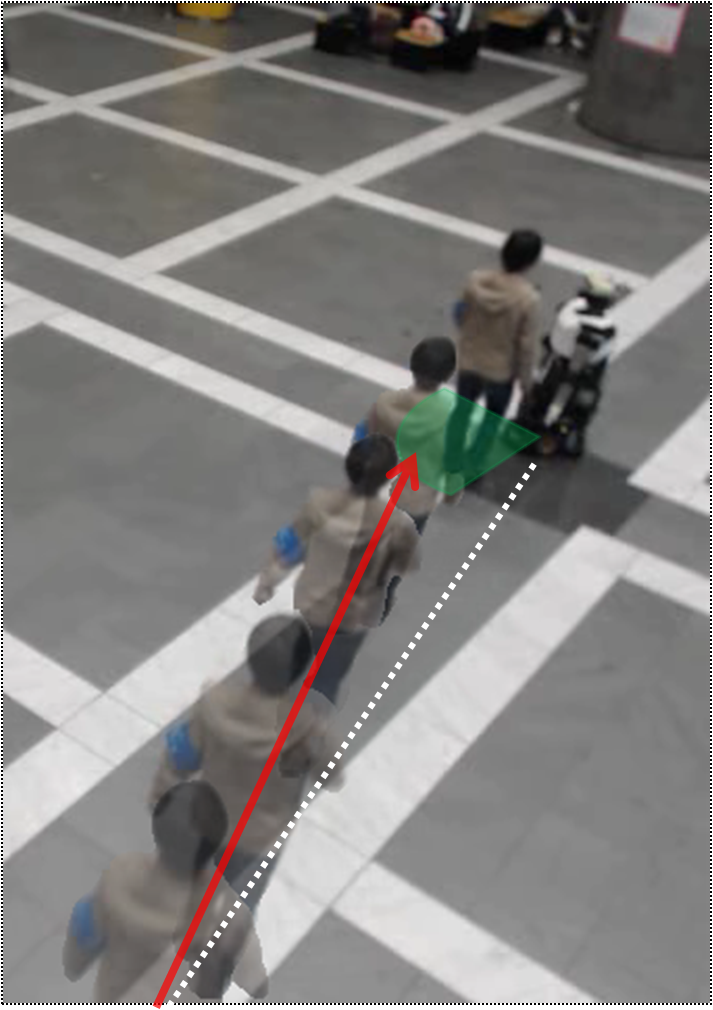

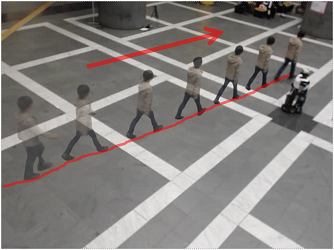

In this category people moved toward the robot, although not always exactly toward the location of the robot. |

|

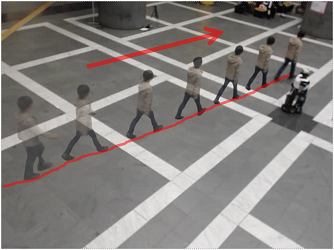

In this category people moved in a different direction. |

Some people intially walked toward some other direction and changed their course toward the robot. |

|

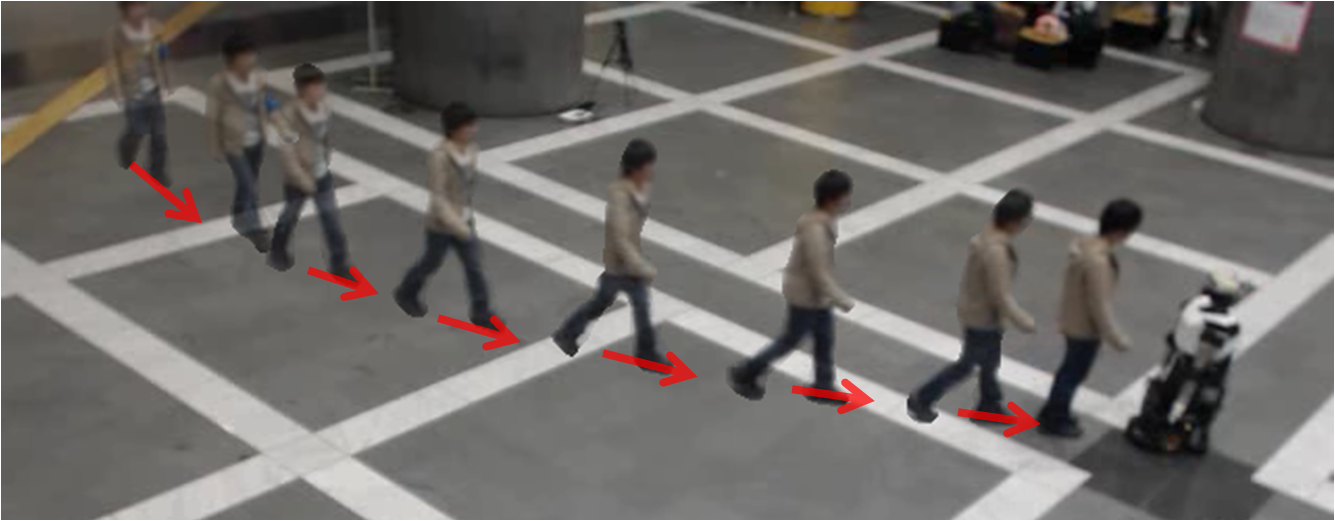

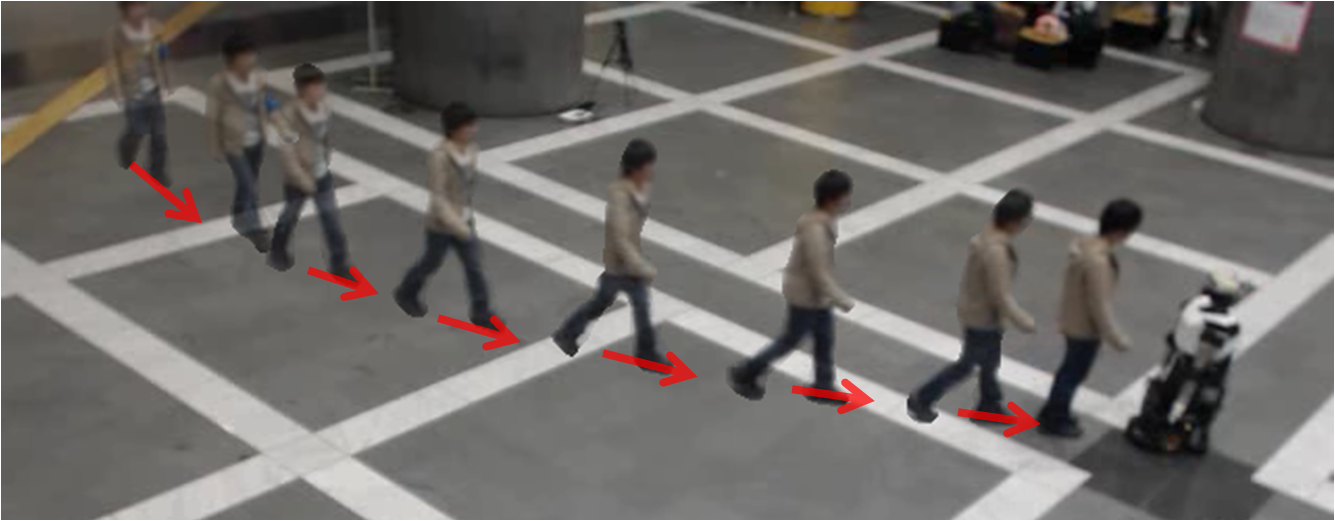

Some people moved in some other direction but the robot approached them, after which they started a conversation. |

(Note: for privacy reasons, the scenes above do not show real pedestrians; they are reproductions performed by the experimenter.)

Dataset

We collected 63 trajectories for Intention to interact, and 67 for Other distinctive intention.

All trajectories are 10 seconds long, ending either when the pedestrian reached the nearest point to the robot or at the moment when they started a conversation.
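As an illustration of the first end condition, the following minimal sketch finds the instant at which a target person is nearest to the robot. It is not the procedure used to build the dataset. The function name `closest_approach` and the `(time, x, y)` tuple layout are hypothetical, and the sketch assumes that the robot and the person are sampled at identical timestamps.

```python
import math


def closest_approach(target_samples, robot_samples):
    """Return (time, distance_mm) of the nearest point to the robot.

    Both arguments are lists of (time_s, x_mm, y_mm) tuples (hypothetical
    layout). Samples are matched by identical timestamps, which is an
    assumption; target samples with no matching robot sample are skipped.
    Returns None if no timestamps match.
    """
    robot_at = {t: (x, y) for t, x, y in robot_samples}
    best = None
    for t, x, y in target_samples:
        if t not in robot_at:
            continue
        rx, ry = robot_at[t]
        dist = math.hypot(x - rx, y - ry)
        if best is None or dist < best[1]:
            best = (t, dist)
    return best
```

Under the 10-second convention described above, the start of the corresponding window would be the returned time minus 10 s.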

The data were recorded in a large hall of the shopping mall.

To consider the influence of the robot’s motion, we prepared two settings:

Static: The robot stayed in the center of the hall at all times. It only spoke when a visitor initiated interaction with it.

Simply-Proactive: The robot moved toward any visitor who entered the hall. When the distance to the visitor was within 2 m and the visitor was in front of the robot (within 60 degrees), the robot started speaking to them.
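The speaking condition of the Simply-Proactive setting can be sketched as a simple geometric test. This is only an illustration under stated assumptions, not the robot's actual controller: the function name `should_start_speaking` is hypothetical, "within 60 degrees" is interpreted here as at most 60 degrees to either side of the robot's heading, and positions are taken in millimetres to match the dataset.

```python
import math


def should_start_speaking(robot_xy_mm, robot_heading_rad, visitor_xy_mm,
                          max_dist_mm=2000.0, max_bearing_deg=60.0):
    """Return True if the visitor is within range and in front of the robot.

    Assumption: the 60-degree limit is measured on either side of the
    robot's heading. Both thresholds are parameters so that a different
    reading of the condition can be tried.
    """
    dx = visitor_xy_mm[0] - robot_xy_mm[0]
    dy = visitor_xy_mm[1] - robot_xy_mm[1]
    dist = math.hypot(dx, dy)
    # Bearing of the visitor relative to the robot's heading, wrapped to [-pi, pi].
    bearing = math.atan2(dy, dx) - robot_heading_rad
    bearing = math.atan2(math.sin(bearing), math.cos(bearing))
    return dist <= max_dist_mm and abs(bearing) <= math.radians(max_bearing_deg)
```

For example, with the robot at the origin and heading along the x axis, a visitor at (1500, 0) satisfies the condition, while a visitor at (2500, 0) is too far away and a visitor at (-1000, 0) is behind the robot.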

The data are provided as CSV files (compressed as zip). Each row corresponds to a single tracked person at a single instant, and it contains the following fields:

time [s] (unixtime + milliseconds/1000), head-count, person id, type (human = 0, robot = 1), position x [mm], position y [mm], position z (height) [mm], velocity [mm/s], angle of motion [rad], facing angle [rad]. The last three fields (head, unique id, options) are not used.
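A minimal sketch of reading such rows with the Python standard library is shown below. It assumes that the files are comma-separated without a header row and that the ten fields listed above appear first and in that order, with any unused fields following them. These are assumptions to check against the actual files. The names `Sample` and `parse_rows` are hypothetical.

```python
import csv
import io
from dataclasses import dataclass


@dataclass
class Sample:
    time_s: float            # unixtime + milliseconds/1000
    head_count: int
    person_id: int
    is_robot: bool           # type field: human = 0, robot = 1
    x_mm: float
    y_mm: float
    z_mm: float              # height
    speed_mm_s: float
    motion_angle_rad: float
    facing_angle_rad: float


def parse_rows(text):
    """Parse CSV text into Sample objects, ignoring trailing unused fields."""
    samples = []
    for row in csv.reader(io.StringIO(text)):
        if len(row) < 10:
            continue  # skip blank or incomplete lines
        samples.append(Sample(
            time_s=float(row[0]),
            head_count=int(float(row[1])),
            person_id=int(float(row[2])),
            is_robot=int(float(row[3])) == 1,
            x_mm=float(row[4]),
            y_mm=float(row[5]),
            z_mm=float(row[6]),
            speed_mm_s=float(row[7]),
            motion_angle_rad=float(row[8]),
            facing_angle_rad=float(row[9]),
        ))
    return samples
```

Reading a file then amounts to `parse_rows(open(path).read())`, where `path` points to one of the extracted CSV files.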

Dataset file [23.3 MB] (We updated the dataset file on 2016.3.24. The data for id "12090402" in the previous file was wrong.)

The folder named "IntentionToInteract" includes trajectories of people who intend to seek help and/or initiate interaction with the robot. The folder named "OtherDistinctiveIntention" includes trajectories of people who apparently DO NOT intend to seek help or initiate interaction with the robot.

Each csv file's name is "dataset_{person id}". The person with {person id} is the target person. In all files the robot's id is 3.
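The naming convention makes it possible to separate the target person, the robot, and bystanders within one file. The sketch below illustrates this under the assumptions that the files carry a ".csv" extension and that rows have already been reduced to tuples whose second element is the person id. The helper names `target_id_from_filename` and `split_tracks` are hypothetical.

```python
from pathlib import Path

ROBOT_ID = 3  # the robot's id in all files, as stated above


def target_id_from_filename(path):
    """Extract the target person's id from a name like 'dataset_12090402.csv'."""
    stem = Path(path).stem
    prefix = "dataset_"
    if not stem.startswith(prefix):
        raise ValueError(f"unexpected file name: {path}")
    return int(stem[len(prefix):])


def split_tracks(rows, target_id):
    """Split rows into (target, robot, others) by person id.

    Each row is a tuple whose second element is the person id
    (hypothetical layout chosen for this example).
    """
    target, robot, others = [], [], []
    for row in rows:
        person_id = row[1]
        if person_id == target_id:
            target.append(row)
        elif person_id == ROBOT_ID:
            robot.append(row)
        else:
            others.append(row)
    return target, robot, others
```

For instance, `target_id_from_filename("IntentionToInteract/dataset_12090402.csv")` yields `12090402`, which can then be passed to `split_tracks`.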

For more details about the tracking system and the corresponding dataset see here.

License

The datasets are free to use for research purposes only.

If you use the datasets in your work, please be sure to cite the reference below.

Reference

Y. Kato, T. Kanda, H. Ishiguro, "May I help you? - Design of human-like polite approaching behavior", ACM/IEEE International Conference on Human-Robot Interaction (HRI 2015), pp. 35-42, 2015

Inquiries and feedback

For any questions concerning the datasets please contact: